Background

In this post I want to share the options for manually removing problematic ESXi-based Transport Nodes running NSX-T 2.4+, 3.x and 4.x from NSX Manager, including the node's NSX configuration and accompanying VIBs.

To be clear, this should be your last resort if the regular procedures for removing ESXi-based Transport Nodes from NSX Manager no longer work. In production environments I would even suggest performing these actions only when asked to by VMware Tech Support.

I wrote this post because of issues I ran into when upgrading NSX-T in my homelab. It is based on articles by fellow VMware community members that really helped me troubleshoot those issues. So credits to the original authors: Wesley Geelhoed for his post “Uninstall sequence NSX-T VIBs (2.2)” and Manny Sidhu for his post “NSX-T Error – Failed to uninstall the software on host”, including the useful reply by Ruurd Bakker.

The post by Wesley and the NSX-T documentation page are both based on version 2.2, and some of the VIB modules have changed in NSX-T 2.5, 3.x and 4.x.

So, from my perspective, a new post that puts it all together was needed.

Update 19-4-2021

- Post updated for NSX-T 3.x

- Updated UI options and CLI output

- Updated VIB list / de-install order

Update 30-4-2023

- Post updated for NSX-T 4.x

- Updated ‘del nsx’ warning

- Updated VIB list / de-install order

- Updated documentation links

The issue

In my homelab I have a 4-node nested ESXi cluster that ran NSX-T 2.4.2 without problems. When 2.5 was released, it was time to upgrade. After upgrading to the 2.5 GA version, one host had issues: it no longer joined the Transport Zone and could not connect to NSX Manager. This was probably the result of me not checking the warnings in the Pre-Check stage of the Transport Node upgrade, because it's “just” a homelab :-).

In hindsight, the root volume and /tmp mount point of the ESXi host probably did not have sufficient free space. During troubleshooting I could not get NSX to function properly on that host again. Even the “Remove NSX” option in the NSX Manager > System > Fabric > Nodes > “Host Transport Node” menu, which should reset the configuration, failed.

My last resort was to remove all the NSX-related configuration and modules from the culprit host. While searching for how to do this, I came across the articles mentioned above. I will describe all the options in this post. They only apply to ESXi-based Transport Nodes running NSX-T 2.4, 2.5, 3.x and 4.x.

Solutions

Several solutions are available. If possible, move the VMs off the node to be removed, put it in “Maintenance Mode”, and reboot it after performing one of the solutions.
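If you only have shell access to the node, maintenance mode can also be entered with `esxcli system maintenanceMode`. The following is a minimal sketch, assuming the VMs have already been moved off the node; `enter_maintenance_mode` and `in_maintenance_mode` are hypothetical helper names, not part of ESXi or NSX.

```sh
# Hypothetical helpers (not part of ESXi or NSX); assumes all VMs have
# already been migrated off or powered down on this node.

# Succeeds when 'esxcli system maintenanceMode get' reports "Enabled".
in_maintenance_mode() {
    esxcli system maintenanceMode get | grep -qx 'Enabled'
}

# Request maintenance mode, then confirm that the host actually entered it.
enter_maintenance_mode() {
    esxcli system maintenanceMode set --enable true || return 1
    in_maintenance_mode
}
```

For example, run `enter_maintenance_mode && echo "in maintenance mode"` before the cleanup, and reboot the node with `reboot` afterwards.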

The recommended order for manually removing Transport Nodes is shown below. Start with the simplest solution and work down to the more complex and rigorous one using ESXi built-in tools.

- Using NSX Manager

- Using NSXCLI

- Using ESXi native tools

Pay special attention if you have nodes with only 2 NICs. In that case there is only one N-VDS, with all VMkernel ports connected to it, so when you perform one of the solutions below on such a node, all network connectivity will stop. Connect to the node's console first: in the node's DCUI you can reset the network configuration to default and restore management connectivity.

Using NSX Manager

If issues arise on an ESXi-based Transport Node (TN), general troubleshooting is the first step towards a solution. The NSX-T 3.x Installation Guide can be helpful.

The install guide also mentions:

If NSX Intelligence is also deployed on the host, uninstallation of NSX-T Data Center will fail because all transport nodes become part of a default network security group. To successfully uninstall NSX-T Data Center, you also need to select the Force Delete option before proceeding with uninstallation.

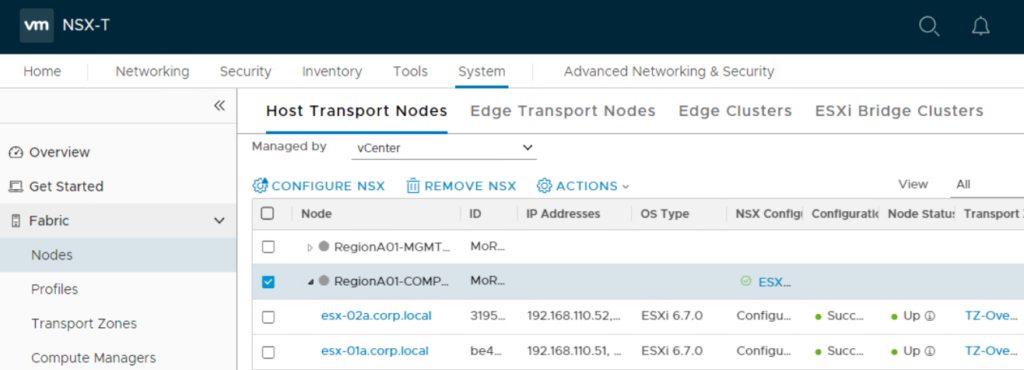

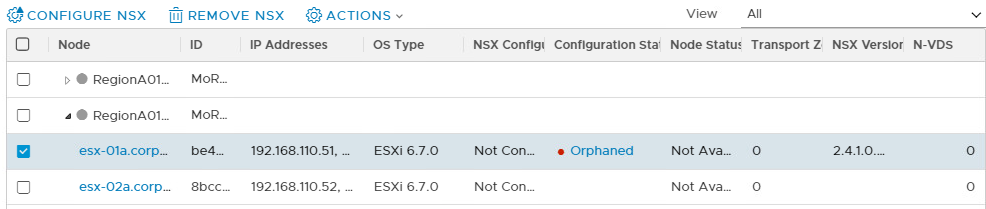

The regular way to remove NSX from a specific TN is the “Remove NSX” option, which can be found in the NSX Manager > System > Fabric > Nodes > “Host Transport Node” menu.

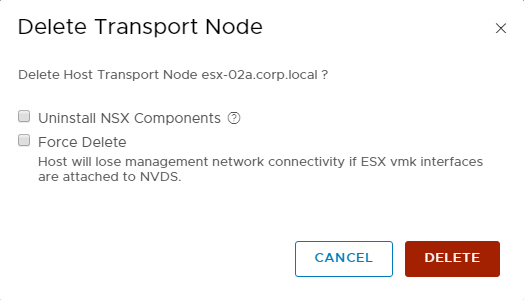

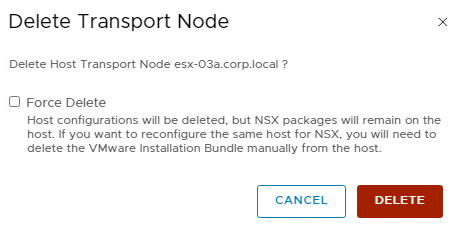

The “Remove NSX” option is only available if no “Transport Node Profile” is attached to the cluster the node belongs to. More on that in the next section, “Transport Node Profiles”. When you select the “Remove NSX” option, the “Delete Transport Node” screen appears with 2 options:

- Uninstall NSX Components (NSX-T 2.x only)

- Force Delete

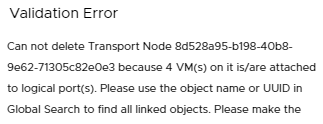

When nothing is checked, it will try to remove the TN configuration from NSX Manager in a safe way. If the node is reachable over the management interface, it also clears the node-local config, but it will not de-install the VIBs. An error is thrown if VMs are still attached to the N-VDS or VDS.

When only “Uninstall NSX Components” is selected (NSX-T 2.x), it will try to remove the TN configuration from NSX Manager in a safe way. If the node is reachable over the management interface, it also clears the node-local config and de-installs the VIBs. An error is thrown if VMs are still attached to the N-VDS.

When only “Force Delete” is selected, it will forcefully remove the TN configuration and TZ participation from NSX Manager while leaving the faulty host as-is, so no VIBs are de-installed. This option is very useful when the node cannot be recovered anymore and/or is not reachable over the management interface. If the node ends up in the “Orphaned” state, the action needs to be run twice before the TN config is fully cleaned up in NSX Manager.

When both are selected (NSX-T 2.x), it will forcefully remove the TN configuration from NSX Manager. If the node is reachable over the management interface, it also clears the node-local config and de-installs the VIBs, even if VMs are still attached to the N-VDS. In most cases you do not need to select both options to remove a faulty TN.

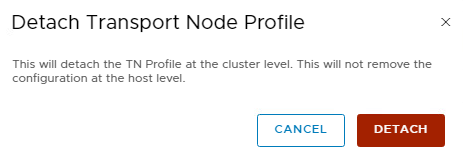

Transport Node Profiles

When a “Transport Node Profile” is attached to the cluster the faulty node resides in, the “Remove NSX” option is not available for that specific node, only for the whole cluster. In this case, use the “Detach Transport Node Profile” option in the “Actions” menu of the cluster the faulty node is in.

Detaching the “Transport Node Profile” from a cluster has no impact on data plane traffic within the cluster. Do not confuse the Detach option with the “Remove NSX” option, which will remove the configuration and VIBs from all nodes in the cluster!

Using NSXCLI

To remove a faulty TN, follow the general steps in the NSX-T 3.x Installation Guide, which mostly also apply to the 2.5+ versions. If all the NSX-T modules are still present on the node, prefer this method over the ESXi native tools described in the next section.

On the NSX Manager, get the API certificate thumbprint.

manager> get certificate api thumbprint

Detach the Transport Node from NSX Manager if possible.

[root@esxi-01a:~] nsxcli

host> detach management-plane <MANAGER> username <ADMIN-USER> password <ADMIN-PASSWORD> thumbprint <MANAGER-THUMBPRINT>

Remove the NSX-T filters. If the command is run without the -Override switch, an error is displayed first.

[root@esxi-01a:~] vsipioctl clearallfilters

ERROR: Command clearallfilters is dangerous and can cause unintended consequence!

ERROR: Please supply first option -Override in order to override the safety guard and actually run the command.

[root@esxi-01a:~] vsipioctl clearallfilters -Override

Removing all vmware-sfw filters...

Cleared dvfilter include table.

Updated all VMs to remove filters.

Destroyed all disconnected filters (please ignore 'Function not implemented' error if there is).
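To check that the filters are really gone before continuing, the output of the ESXi `summarize-dvfilter` command can be searched for remaining `vmware-sfw` filters. This is a minimal sketch: `check_no_sfw_filters` is a hypothetical helper, and matching on the string `vmware-sfw` is an assumption based on the `vsipioctl` output above.

```sh
# Hypothetical helper: reads 'summarize-dvfilter' output on stdin and fails
# if any line still mentions vmware-sfw (the filter name used by vsipioctl).
check_no_sfw_filters() {
    # grep -c prints the number of matching lines (0 when none match).
    remaining=$(grep -c 'vmware-sfw')
    if [ "$remaining" -gt 0 ]; then
        echo "$remaining vmware-sfw filter line(s) still present" >&2
        return 1
    fi
    echo "No vmware-sfw filters found"
}
```

Usage on the node: `summarize-dvfilter | check_no_sfw_filters`.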

Stop the netcpa service (NSX-T 2.x).

[root@esxi-01a:~] /etc/init.d/netcpad stop

Remove all NSX-T VIBs and the relevant config, and reboot the host afterwards. In NSX-T 2.4 the “del nsx” command runs without a warning, whereas 2.5+ displays one. The warning below is from 4.x, but it is largely the same as in older versions.

[root@esxi-01a:~] nsxcli

esxi-01a> del nsx

*************** STOP STOP STOP STOP STOP ***************

Carefully read the requirements and limitations of this command:

1. Read NSX documentation for 'Remove a Host from NSX or Uninstall NSX Completely'.

2. Deletion of this Transport Node from the NSX UI or API failed, and this is the last resort.

3. If this is an ESXi host:

a. The host must be in maintenance mode.

b. All resources attached to NSXPGs must be moved out.

If the above conditions for ESXi hosts are not met, the command WILL fail.

4. If this is a Linux host:

a. If KVM is managing VM tenants then shut them down before running this command.

b. This command should be run from the host console and may fail if run from an SSH client or any other network based shell client.

c. The 'nsxcli -c del nsx' form of this command is not supported

5. If this is a Windows host:

NOTE: This will completely remove all NSX instances (image and config) from the host.

6. For command progress check /scratch/log/nsxcli.log on ESXi host or /var/log/nsxcli.log on non-ESXi host.

Are you sure you want to remove NSX on this host? (yes/no)

If the faulty node is still in a configured state in NSX Manager, perform the “Remove NSX” option (described in the previous section) with the “Force Delete” option selected. This will clean up its configuration in NSX Manager.

Using ESXCLI

If none of the above options is possible for whatever reason, a manual cleanup may be needed. It can be performed with the standard ESXi tools, provided the ESXi bootbank is still okay.

The config that is normally removed by NSX Manager or NSXCLI (besides the VIBs) is:

- The NSX VMkernel ports (vxlan and hyperbus)

- The NSX network IO filters

- The NSX IP stacks (vxlan and hyperbus)

So, how do you perform a manual cleanup? First, try to remove the network IO filters, as described in the previous section.

Secondly, delete the NSX VMkernel ports. Normally vmk10 is the vxlan kernel port (despite the name, it actually carries the GENEVE overlay) and vmk50 is the hyperbus kernel port.

You might wonder what the hyperbus is. The hyperbus is the component that performs the actual network auto-plumbing. In the image below this is the intra-T0/T1 routing (#4 in the image) between the Distributed Router (DR) and Service Router (SR) components (169.254.0.0 subnet), and the inter-T0/T1 routing (#3 in the image) between the T1 SR and the T0 DR (100.64.0.0 subnet).

List the kernel ports.

[root@esxi-01a:~] esxcli network ip interface list

…

vmk10

Name: vmk10

MAC Address: 00:50:56:ab:cd:ef

Enabled: true

Portset: DvsPortset-1

Portgroup: N/A

Netstack Instance: vxlan

VDS Name: ndvSwitch0

VDS UUID: 19 f4 3b 76 1a 2b 4f 88-b2 bd 67 b3 86 11 49 2d

VDS Port: 10

VDS Connection: 1543783261

Opaque Network ID: N/A

Opaque Network Type: N/A

External ID: N/A

MTU: 1600

TSO MSS: 65535

RXDispQueue Size: 1

Port ID: 67108870

vmk50

Name: vmk50

MAC Address: 00:50:56:ab:cd:ef

Enabled: true

Portset: DvsPortset-1

Portgroup: N/A

Netstack Instance: hyperbus

VDS Name: ndvSwitch0

VDS UUID: 19 f4 3b 76 1a 2b 4f 88-b2 bd 67 b3 86 11 49 2d

VDS Port: 0d02d084-213c-491e-9bb5-1ab895e98b5d

VDS Connection: 1543783261

Opaque Network ID: N/A

Opaque Network Type: N/A

External ID: N/A

MTU: 1500

TSO MSS: 65535

RXDispQueue Size: 1

Port ID: 67108871

Remove the NSX VMkernel ports (this could also include vmk11).

[root@esxi-01a:~] esxcli network ip interface remove --interface-name=vmk10

[root@esxi-01a:~] esxcli network ip interface remove --interface-name=vmk50
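Instead of picking the ports out of the interface list by eye, the VMkernel ports bound to the NSX netstacks can be selected from the `esxcli network ip interface list` output. This is a minimal sketch, assuming the netstack names `vxlan` and `hyperbus` shown above; `nsx_vmknics` and `remove_nsx_vmknics` are hypothetical helpers.

```sh
# Hypothetical helper: read 'esxcli network ip interface list' output on
# stdin and print each VMkernel port whose netstack is vxlan or hyperbus.
nsx_vmknics() {
    awk '
        # Remember the interface name; the anchor skips "VDS Name:" lines.
        /^[[:space:]]*Name:/ { name = $2 }
        # "Netstack Instance: <stack>" puts the stack name in field 3.
        /^[[:space:]]*Netstack Instance:/ {
            if ($3 == "vxlan" || $3 == "hyperbus") print name
        }
    '
}

# Hypothetical helper: remove every NSX VMkernel port found by nsx_vmknics
# (normally vmk10 and vmk50, possibly vmk11).
remove_nsx_vmknics() {
    for vmk in $(esxcli network ip interface list | nsx_vmknics); do
        echo "Removing $vmk"
        esxcli network ip interface remove --interface-name="$vmk" || return 1
    done
}
```

Running `esxcli network ip interface list | nsx_vmknics` first shows which ports `remove_nsx_vmknics` would remove.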

Now list the netstacks

[root@esxi-01a:~] esxcli network ip netstack list

defaultTcpipStack

Key: defaultTcpipStack

Name: defaultTcpipStack

State: 4660

vxlan

Key: vxlan

Name: vxlan

State: 4660

hyperbus

Key: hyperbus

Name: hyperbus

State: 4660

Remove the NSX related netstacks

[root@esxi-01a:~] esxcli network ip netstack remove --netstack=vxlan

[root@esxi-01a:~] esxcli network ip netstack remove --netstack=hyperbus
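The same approach works for the netstacks: only the `vxlan` and `hyperbus` keys are removed, and only if they are still present. This is a minimal sketch with hypothetical helper names, assuming the NSX VMkernel ports have already been removed, following the order above.

```sh
# Hypothetical helper: read 'esxcli network ip netstack list' output on
# stdin and print the NSX netstack keys (vxlan, hyperbus) that still exist.
nsx_netstacks() {
    awk '/^[[:space:]]*Key:/ && ($2 == "vxlan" || $2 == "hyperbus") { print $2 }'
}

# Hypothetical helper: remove the NSX netstacks that are still present.
remove_nsx_netstacks() {
    for ns in $(esxcli network ip netstack list | nsx_netstacks); do
        echo "Removing netstack $ns"
        esxcli network ip netstack remove --netstack="$ns" || return 1
    done
}
```

Because the list is read from the live output, running `remove_nsx_netstacks` a second time simply does nothing once both netstacks are gone.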

Check if the N-VDS still exists

If the N-VDS still exists, it affects the removal of the actual VIBs later in this section.

[root@esxi-01a:~] esxcfg-vswitch -l

The last step is to remove all the NSX-related VIBs that still exist on the node. The VIBs cannot be removed in an arbitrary order, because they depend on each other. First, list the VIBs to be removed from the node.

List NSX-T 2.x VIBs

The full list of NSX-T 2.x VIBs normally present on an ESXi-based node is:

[root@host:~] esxcli software vib list | grep -E 'nsx|vsipfwlib'

nsx-adf 2.5.1.0.0-6.7.15314402 VMware VMwareCertified 2020-01-03

nsx-aggservice 2.5.1.0.0-6.7.15314423 VMware VMwareCertified 2020-01-03

nsx-cli-libs 2.5.1.0.0-6.7.15314375 VMware VMwareCertified 2020-01-03

nsx-common-libs 2.5.1.0.0-6.7.15314375 VMware VMwareCertified 2020-01-03

nsx-context-mux 2.5.1.0.0esx67-15314456 VMware VMwareCertified 2020-01-03

nsx-esx-datapath 2.5.1.0.0-6.7.15314311 VMware VMwareCertified 2020-01-03

nsx-exporter 2.5.1.0.0-6.7.15314423 VMware VMwareCertified 2020-01-03

nsx-host 2.5.1.0.0-6.7.15314289 VMware VMwareCertified 2020-01-03

nsx-metrics-libs 2.5.1.0.0-6.7.15314375 VMware VMwareCertified 2020-01-03

nsx-mpa 2.5.1.0.0-6.7.15314423 VMware VMwareCertified 2020-01-03

nsx-nestdb-libs 2.5.1.0.0-6.7.15314375 VMware VMwareCertified 2020-01-03

nsx-nestdb 2.5.1.0.0-6.7.15314393 VMware VMwareCertified 2020-01-03

nsx-netcpa 2.5.1.0.0-6.7.15314440 VMware VMwareCertified 2020-01-03

nsx-netopa 2.5.1.0.0-6.7.15314363 VMware VMwareCertified 2020-01-03

nsx-opsagent 2.5.1.0.0-6.7.15314423 VMware VMwareCertified 2020-01-03

nsx-platform-client 2.5.1.0.0-6.7.15314423 VMware VMwareCertified 2020-01-03

nsx-profiling-libs 2.5.1.0.0-6.7.15314375 VMware VMwareCertified 2020-01-03

nsx-proxy 2.5.1.0.0-6.7.15314435 VMware VMwareCertified 2020-01-03

nsx-python-gevent 1.1.0-9273114 VMware VMwareCertified 2018-12-02

nsx-python-greenlet 0.4.9-12819723 VMware VMwareCertified 2019-09-20

nsx-python-logging 2.5.1.0.0-6.7.15314402 VMware VMwareCertified 2020-01-03

nsx-python-protobuf 2.6.1-12818951 VMware VMwareCertified 2019-09-20

nsx-rpc-libs 2.5.1.0.0-6.7.15314375 VMware VMwareCertified 2020-01-03

nsx-sfhc 2.5.1.0.0-6.7.15314423 VMware VMwareCertified 2020-01-03

nsx-shared-libs 2.5.1.0.0-6.7.15036308 VMware VMwareCertified 2020-01-03

nsx-upm-libs 2.5.1.0.0-6.7.15314375 VMware VMwareCertified 2020-01-03

nsx-vdpi 2.5.1.0.0-6.7.15314422 VMware VMwareCertified 2020-01-03

nsxcli 2.5.1.0.0-6.7.15314296 VMware VMwareCertified 2020-01-03

List NSX-T 3.x VIBs

NSX-T 3.x has a different set of VIBs. On an ESXi host, they are:

[root@host:~] esxcli software vib list | grep -E 'nsx|vsipfwlib'

nsx-adf 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-cfgagent 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-context-mux 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-cpp-libs 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-esx-datapath 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-exporter 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-host 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-ids 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-monitoring 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-mpa 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-nestdb 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-netopa 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-opsagent 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-platform-client 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-proto2-libs 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-proxy 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-python-gevent 1.1.0-15366959 VMware VMwareCertified 2021-02-16

nsx-python-greenlet 0.4.14-16723199 VMware VMwareCertified 2021-02-16

nsx-python-logging 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-python-protobuf 2.6.1-16723197 VMware VMwareCertified 2021-02-16

nsx-python-utils 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-sfhc 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-shared-libs 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsx-vdpi 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

nsxcli 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

vsipfwlib 3.1.2.0.0-7.0.17883598 VMware VMwareCertified 2021-04-19

List NSX-T 4.x VIBs

NSX-T 4.x has a slightly different set of VIBs towards the end of the list. On an ESXi host, they are:

[root@host:~] esxcli software vib list | grep -E 'nsx|vsipfwlib'

nsx-adf 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-cfgagent 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-context-mux 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-cpp-libs 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-esx-datapath 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-exporter 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-host 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-ids 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-monitoring 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-mpa 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-nestdb 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-netopa 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-opsagent 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-platform-client 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-proto2-libs 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-proxy 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-python-gevent 1.3.5.py38-19972216 VMware VMwareCertified 2023-01-31

nsx-python-greenlet 0.4.14.py38-19345965 VMware VMwareCertified 2023-01-31

nsx-python-logging 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-python-protobuf 2.6.1-19195979 VMware VMwareCertified 2023-01-31

nsx-python-utils 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-sfhc 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-shared-libs 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-snproxy 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsx-vdpi 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

nsxcli 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

vsipfwlib 4.0.1.1.0-8.0.20598730 VMware VMwareCertified 2023-01-31

Now remove the VIBs from the node in the correct order, running the command once for every VIB. The “nsx-esx-datapath” VIB (the last one in the 2.x order) cannot be removed if the N-VDS is still present on the node, so only for that VIB the “--no-live-install” switch must be added.

NSX-T 2.x de-install order

For NSX-T 2.4 and 2.5 the de-install list and order is:

[root@esxi-01a:~] esxcli software vib remove -n <vib name below>

nsx-host

nsx-adf

nsx-exporter

nsx-aggservice

nsx-platform-client

nsx-python-logging

nsx-opsagent

nsx-proxy

nsx-nestdb

nsx-sfhc

nsx-context-mux

nsx-python-protobuf

nsx-python-greenlet

nsx-python-gevent

nsxcli

nsx-netopa (2.5 only)

nsx-netcpa

nsx-profiling-libs

nsx-mpa

nsx-vdpi

nsx-nestdb-libs

nsx-rpc-libs

nsx-metrics-libs

nsx-upm-libs

nsx-common-libs

nsx-shared-libs

nsx-cli-libs

nsx-esx-datapath --no-live-install

NSX-T 3.x de-install order

For NSX-T 3.x the de-install list and order is different:

[root@esxi-01a:~] esxcli software vib remove -n <vib name below>

nsx-host

nsx-adf

nsx-exporter

nsx-context-mux

nsx-platform-client

nsx-opsagent

nsx-proxy

nsx-sfhc

nsx-netopa

nsxcli

nsx-nestdb

nsx-cfgagent

nsx-mpa

nsx-vdpi

nsx-ids (nsx-idps in 3.0)

nsx-monitoring

nsx-python-logging

nsx-python-protobuf

nsx-python-greenlet

nsx-python-gevent

nsx-python-utils

nsx-cpp-libs

nsx-esx-datapath --no-live-install

vsipfwlib

nsx-proto2-libs

nsx-shared-libs

NSX-T 4.x de-install order

[root@esxi-01a:~] esxcli software vib remove -n <vib name below>

nsx-host

nsx-adf

nsx-exporter

nsx-context-mux

nsx-platform-client

nsx-opsagent

nsx-proxy

nsx-sfhc

nsx-netopa

nsxcli

nsx-snproxy (new in 4.x)

nsx-nestdb

nsx-cfgagent

nsx-mpa

nsx-vdpi

nsx-ids

nsx-monitoring

nsx-python-logging

nsx-python-protobuf

nsx-python-greenlet

nsx-python-gevent

nsx-python-utils

nsx-cpp-libs

nsx-esx-datapath --no-live-install

vsipfwlib

nsx-proto2-libs

nsx-shared-libs

Without the “--no-live-install” switch, an error is thrown if an “opaque” portgroup connected to an N-VDS is present on the node. With the switch, the removal is staged and takes effect after the reboot.
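The 4.x order can also be scripted, so that VIBs that are not installed are skipped and only “nsx-esx-datapath” gets the “--no-live-install” switch. This is a minimal sketch, not an official VMware procedure: `installed_nsx_vibs` and `remove_nsx4_vibs` are hypothetical helpers, the order is copied from the 4.x list above, and by default the function only prints the commands (pass `apply` to run them).

```sh
# VIB removal order for NSX 4.x, copied from the 4.x de-install order.
NSX4_ORDER="nsx-host nsx-adf nsx-exporter nsx-context-mux nsx-platform-client
nsx-opsagent nsx-proxy nsx-sfhc nsx-netopa nsxcli nsx-snproxy nsx-nestdb
nsx-cfgagent nsx-mpa nsx-vdpi nsx-ids nsx-monitoring nsx-python-logging
nsx-python-protobuf nsx-python-greenlet nsx-python-gevent nsx-python-utils
nsx-cpp-libs nsx-esx-datapath vsipfwlib nsx-proto2-libs nsx-shared-libs"

# Hypothetical helper: print the names of the installed NSX VIBs
# (first column of 'esxcli software vib list'; header lines do not match).
installed_nsx_vibs() {
    esxcli software vib list | awk '$1 ~ /^nsx/ || $1 == "vsipfwlib" { print $1 }'
}

# Hypothetical helper: walk the order and remove each installed VIB.
# Without the argument "apply" the commands are only printed (dry run).
remove_nsx4_vibs() {
    installed=$(installed_nsx_vibs)
    for vib in $NSX4_ORDER; do
        # Skip VIBs that are not (or no longer) installed.
        printf '%s\n' "$installed" | grep -qx "$vib" || continue
        # Only nsx-esx-datapath needs --no-live-install (N-VDS may still exist).
        extra=""
        if [ "$vib" = "nsx-esx-datapath" ]; then
            extra="--no-live-install"
        fi
        if [ "$1" = "apply" ]; then
            esxcli software vib remove -n "$vib" $extra || return 1
        else
            echo "esxcli software vib remove -n $vib${extra:+ $extra}"
        fi
    done
}
```

Review the commands printed by `remove_nsx4_vibs` first, then run `remove_nsx4_vibs apply` and reboot the node. Stopping at the first failure keeps a dependency error from cascading into the remaining removals.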

To conclude

Hopefully this post shows which options are available to get ESXi-based Transport Nodes back to a working state when things go bad. It is not intended to troubleshoot or fix TN-related issues, but rather to show how to clean up a node and start over with a fresh NSX-T config and VIBs without reinstalling the node.

To wrap it all up, I would like to thank Manny and Wesley, who wrote the articles I based mine on. Lastly, beware of the consequences of performing any of the above options in a live environment. Contact VMware Support if you are not sure what the impact may be.

Useful links

Blogs

Uninstall sequence NSX-T VIBs (2.2)

NSX-T Error – Failed to uninstall the software on host. MPA not working. Host is disconnected (2.4)

4 Comments

Jurgen Mutzberg · October 16, 2020 at 10:55 am

Hi Daniel,

this is an EXCELLENT post!

It saved me hours to try to remove a faulty ESXi node from my NSX-T setup.

Really very well written post.

Thanks,

Jurgen

Daniël Zuthof · October 22, 2020 at 10:24 pm

Hi Jurgen. It makes me happy to read my post is very useful to you. Thanks for your kind reaction.

Calvin Wong · July 20, 2021 at 5:30 am

Good one !! the buggy NSX-T needs more nice doc like what you’ve written!

😀

Daniël Zuthof · August 4, 2021 at 11:06 am

Thanks for your kind feedback, Calvin.